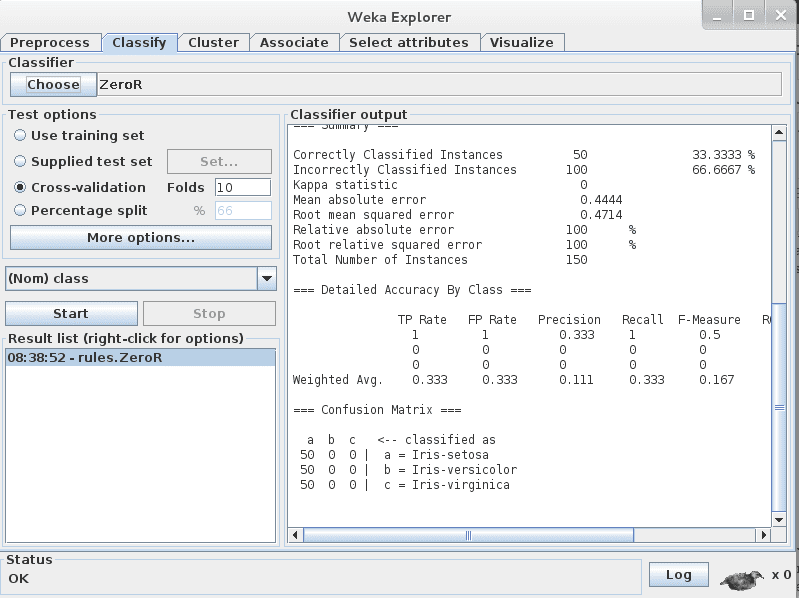

The outer loop of cross-validation, as run by the Explorer or command line, estimates the performance of the model on future (as yet unseen) data. I don't think you understand the purpose of cross-validation here.

What's its utility when it only saves a product of some bare scheme 1st iteration model? How do I save a Final model reflecting the Complete construct?

Java -x 4 -v -o -t dingus.arff -T dingus.arff -d stored4.model -V 30 -M 0.2 -L 0.1 &

And all the stored models are Exactly the Same, I really do not understand how -d works.

Java -no-cv -v -o -t dingus.arff -T dingus.arff -d stored3.model -V 30 -M 0.2 -L 0.1 &

Java -v -o -t dingus.arff -d stored2.model -V 30 -M 0.2 -L 0.1 &

Java -v -o -t dingus.arff -T dingus.arff -d stored1.model -V 30 -M 0.2 -L 0.1 &

I think I did not understand your explanation of how -d works.

from x validation I need to always use also -T together with -t?

Wow! So the model exported with -d is not the one with optimized parameters on a cross validation or a split data scheme, it is always just 1 fold with its parameters optimized only on this one fold? And in order to get the final model benefiting e.g.

Just use a bogus test set (or the training data for testing) if all you are interested in is getting the model saved. So, if you specify 5-fold cross-validation, a model is first built on all the data (and saved via -d), and then the cross-validation is performed.
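To make that order of operations concrete, here is a toy sketch in plain Python. It is not Weka code: `MajorityClassifier` and `evaluate` are hypothetical stand-ins, and the 70/30 label list is made-up data. It only assumes the behaviour described above, namely that the model to be saved is built on all the data and that cross-validation then builds separate throwaway models for the estimate.

```python
import random
from collections import Counter


class MajorityClassifier:
    """Toy stand-in for a learning scheme; it only sees the data passed to build()."""

    def build(self, labels):
        self.prediction = Counter(labels).most_common(1)[0][0]
        self.n_train = len(labels)
        return self

    def predict(self):
        return self.prediction


def evaluate(labels, folds=10, seed=1):
    """Hypothetical driver: returns (model to be saved, cross-validated accuracy)."""
    # Step 1: the model that would be saved is built on ALL the data.
    final_model = MajorityClassifier().build(labels)

    # Step 2: cross-validation builds a fresh model per fold,
    # used only to estimate performance and then discarded.
    shuffled = labels[:]
    random.Random(seed).shuffle(shuffled)
    correct = 0
    for i in range(folds):
        test = shuffled[i::folds]
        train = [y for j, y in enumerate(shuffled) if j % folds != i]
        fold_model = MajorityClassifier().build(train)
        correct += sum(1 for y in test if y == fold_model.predict())
    return final_model, correct / len(labels)


labels = ["a"] * 70 + ["b"] * 30  # made-up data
model_4, acc_4 = evaluate(labels, folds=4)
model_10, acc_10 = evaluate(labels, folds=10)

print(model_4.n_train)                 # the saved model saw every instance
print(vars(model_4) == vars(model_10)) # the fold count does not change the saved model
```

In this sketch the number of folds only changes how the accuracy estimate is computed, which is consistent with the stored models coming out identical across the different evaluation options.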

When -d is used to save a model from the command line, it is always the model built on all the data (regardless of the evaluation method selected). The scheme is not aware of the evaluation procedure; it is just presented with training data via its buildClassifier() method. So, for example, the -V option will set aside some percentage of the training data to use for internal validation of the network. If the training data happens to be a cross-validation fold, then some percentage of that fold will be held out. If a classifier does its own validation with a held-out set, then that is an inner validation on every outer cross-validation fold presented to the classifier via its buildClassifier() method.

10-fold cross-validation is the default if a test set (or percentage split) option is not specified. General options are lowercase, and scheme-specific options will be uppercase for all single-character options (such as -V for MLP) and can be lowercase for multi-character options (the help will show which are scheme-specific). -x is a general option applicable to all classifiers (use -h to see all general and scheme-specific options).
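The arithmetic behind the inner hold-out can be sketched as follows. This is an illustration only: the 1000-instance dataset is hypothetical, the helper names are made up, and the rounding used here is an assumption rather than Weka's exact rule. It shows how a 30% internal validation set is carved out of whatever training data the scheme is handed, whether that is the full dataset or one outer cross-validation fold.

```python
def inner_split_sizes(n_train, validation_percent):
    """Return (instances used for fitting, instances held out internally)."""
    # Rounding is an assumption made for this sketch.
    held_out = round(n_train * validation_percent / 100)
    return n_train - held_out, held_out


def fold_train_sizes(n_instances, folds):
    """Training-set size for each outer cross-validation fold."""
    sizes = []
    for i in range(folds):
        test_size = n_instances // folds + (1 if i < n_instances % folds else 0)
        sizes.append(n_instances - test_size)
    return sizes


n = 1000  # hypothetical dataset size

# Model built on all the data (the one saved with -d), with -V 30:
print(inner_split_sizes(n, 30))  # (700, 300)

# Each model built during 10-fold cross-validation, with -V 30:
for size in fold_train_sizes(n, 10):
    print(size, inner_split_sizes(size, 30))  # 900 (630, 270) for every fold
```

Under these assumptions the saved model is fitted on 700 instances with 300 held out, while each cross-validation model is fitted on 630 of its 900 training instances.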